机器学习-2.Python机器学习软件包Scikit-Learn的学习与运用

EduCoder平台:机器学习—Python机器学习软件包Scikit-Learn的学习与运用第1关:使用scikit-learn导入数据集编程要求:本关任务是,使用 scikit-learn 的datasets模块导入iris数据集,提取前 5 条原数据、前 5 条数据标签及原数据的数组大小。请按照编程要求,补全右侧编辑器Begin-End区间的代码。测试说明:本关的测试文件是step1/tes

EduCoder:机器学习—Python机器学习软件包Scikit-Learn的学习与运用

第1关:使用scikit-learn导入数据集

编程要求:

本关任务是,使用 scikit-learn 的datasets模块导入iris数据集,提取前 5 条原数据、前 5 条数据标签及原数据的数组大小。

请按照编程要求,补全右侧编辑器Begin-End区间的代码。

测试说明:

本关的测试文件是step1/testImportData.py,该代码负责对你的实现代码进行测试,注意step1/testImportData.py 不能被修改,该测试代码具体如下:

代码如下:

from sklearn import datasets

def getIrisData():

'''

导入Iris数据集

返回值:

X - 前5条训练特征数据

y - 前5条训练数据类别

X_shape - 训练特征数据的二维数组大小

'''

#初始化

X = []

y = []

X_shape = ()

# 请在此添加实现代码 #

#********** Begin *********#

iris=datasets.load_iris()

X=iris.data[:5]

y=iris.target[:5]

X_shape=iris.data.shape

#********** End **********#

return X,y,X_shape

第2关:数据预处理 — 标准化

编程要求:

本关任务希望对于 California housing 数据集进行标准化转换。

代码中已通过fetch_california_housing函数加载好了数据集 California housing 数据集包含 8 个特征,分别是

[‘MedInc’, ‘HouseAge’, ‘AveRooms’, ‘AveBedrms’, ‘Population’, ‘AveOccup’, ‘Latitude’, ‘Longitude’],

可通过dataset.feature_names访问数据具体的特征名称,通过在上一关卡的学习,相信大家对于原始数据的查看应该比较熟练了,在这里不过多说明。

本次任务只对 California housing 数据集中的两个特征进行操作,分别是

第 1 个特征 MedInc,其数据服从长尾分布;

第 6 个特征 AveOccup,数据中包含大量离群点。

本关分成为几个子任务:

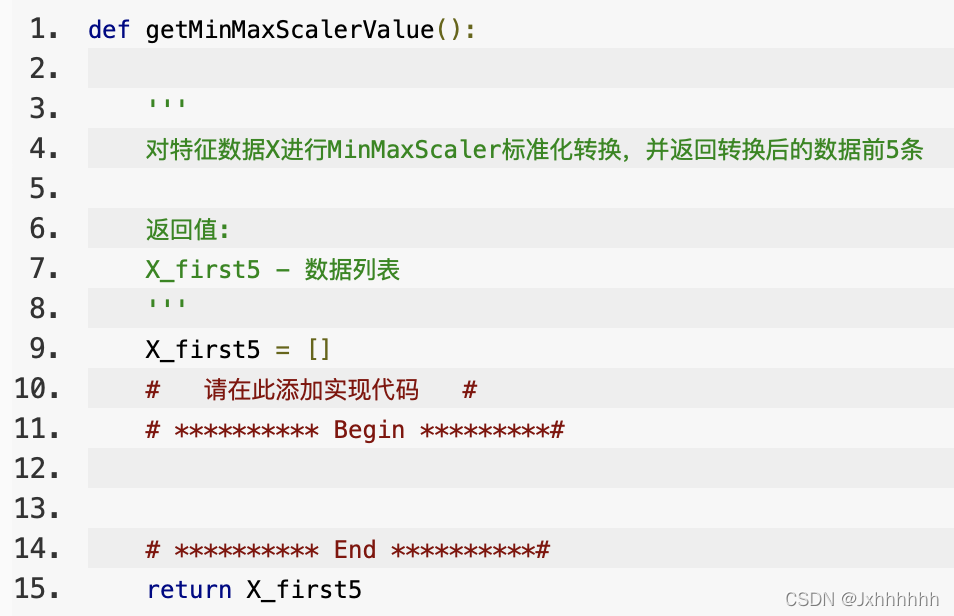

- 1.使用 MinMaxScaler 对特征数据 X 进行标准化转换,并返回转换后的特征数据的前 5 条;

要补充的代码块如下:

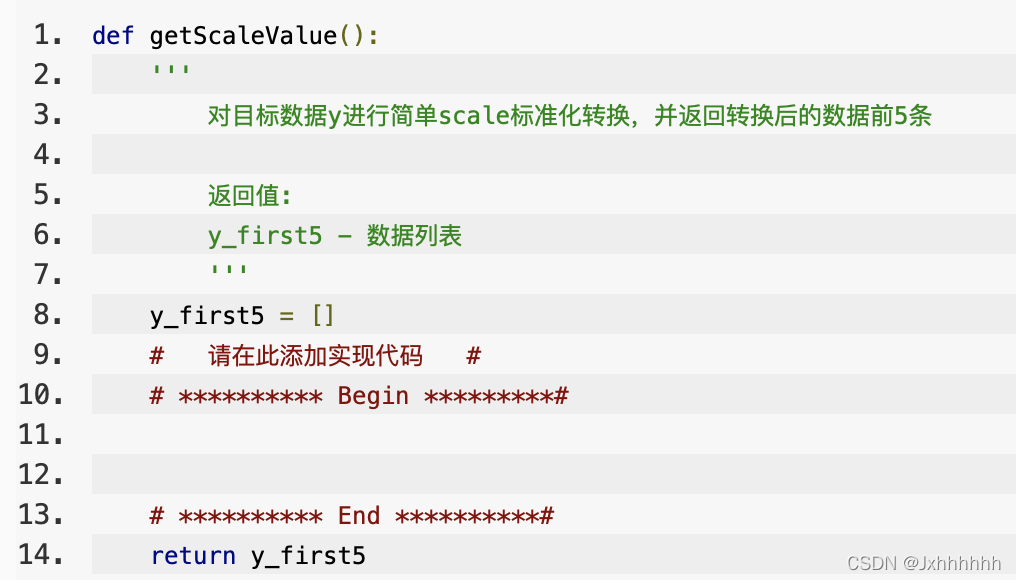

- 2.使用 scale 对目标数据 y 进行标准化转换,并返回转换后的前 5 条数据;

要补充的代码块如下:

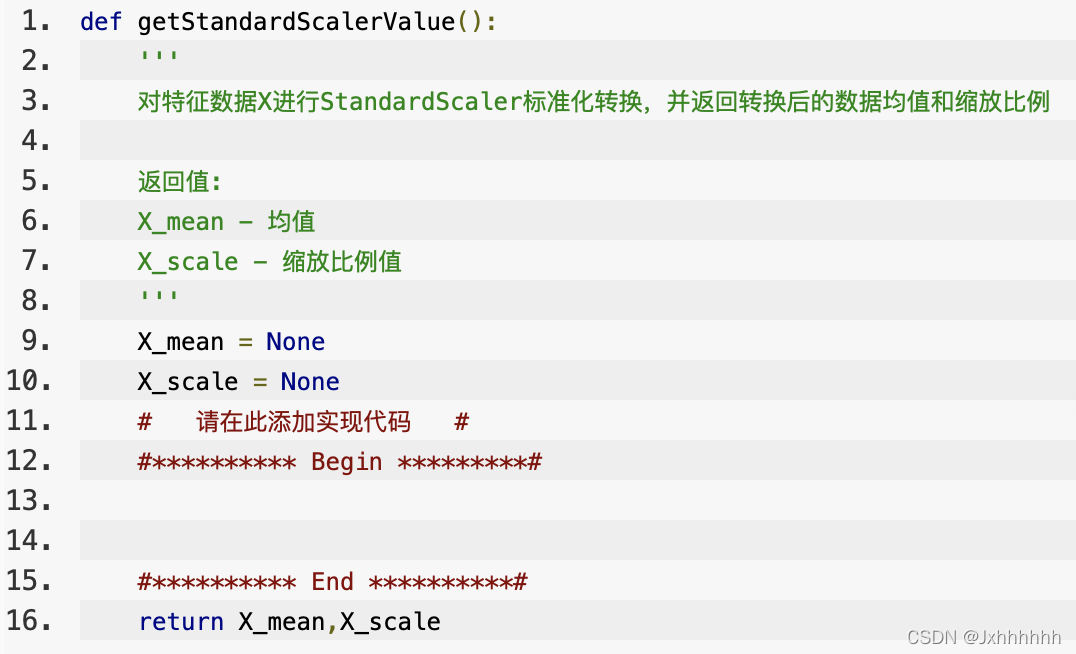

- 3.使用 StandardScaler 对特征数据 X 的进行标准化转换,并返回转换后的均值和缩放比例值。

要补充的代码块如下:

代码如下:

from sklearn.preprocessing import MinMaxScaler

from sklearn.preprocessing import scale

from sklearn.preprocessing import StandardScaler

from sklearn.datasets import fetch_california_housing

'''

Data descrption:

The data contains 20,640 observations on 9 variables.

This dataset contains the average house value as target variable

and the following input variables (features): average income,

housing average age, average rooms, average bedrooms, population,

average occupation, latitude, and longitude in that order.

dataset : dict-like object with the following attributes:

dataset.data : ndarray, shape [20640, 8]

Each row corresponding to the 8 feature values in order.

dataset.target : numpy array of shape (20640,)

Each value corresponds to the average house value in units of 100,000.

dataset.feature_names : array of length 8

Array of ordered feature names used in the dataset.

dataset.DESCR : string

Description of the California housing dataset.

'''

dataset = fetch_california_housing("./step4/")

X_full, y = dataset.data, dataset.target

#抽取其中两个特征数据

X = X_full[:, [0, 5]]

def getMinMaxScalerValue():

'''

对特征数据X进行MinMaxScaler标准化转换,并返回转换后的数据前5条

返回值:

X_first5 - 数据列表

'''

X_first5 = []

# 请在此添加实现代码 #

# ********** Begin *********#

min_max=MinMaxScaler()

X_first5=min_max.fit_transform(X)[:5]

# ********** End **********#

return X_first5

def getScaleValue():

'''

对目标数据y进行简单scale标准化转换,并返回转换后的数据前5条

返回值:

y_first5 - 数据列表

'''

y_first5 = []

# 请在此添加实现代码 #

# ********** Begin *********#

y_first5=scale(y)[:5]

# ********** End **********#

return y_first5

def getStandardScalerValue():

'''

对特征数据X进行StandardScaler标准化转换,并返回转换后的数据均值和缩放比例

返回值:

X_mean - 均值

X_scale - 缩放比例值

'''

X_mean = None

X_scale = None

# 请在此添加实现代码 #

#********** Begin *********#

a=StandardScaler().fit(X)

X_mean=a.mean_

X_scale=a.scale_

#********** End **********#

return X_mean,X_scale

第3关:文本数据特征提取

编程要求:

本关任务,希望分别使用 CountVectorizer 和 TfidfVectorizer 对新闻文本数据进行特征提取,并验证提取是否正确。

数据介绍:

采用fetch_20newsgroups(“./step5/”,subset=‘train’, categories=categories)函数加载对应目录的新闻数据,subset='train’指定采用的是该数据的训练集,categories 指定新闻目录,具体的函数调用可参考官方文档fetch_20newsgroups。X 是一个 string 数组,包含 857 个新闻文档。

本关具体分成为几个子任务:

1.使用 CountVectorizer 方法提取新闻数据的特征向量,返回词汇表大小和前五条特征向量;

要补充的代码块如下:

def transfer2CountVector():

'''

使用CountVectorizer方法提取特征向量,返回词汇表大小和前五条特征向量

返回值:

vocab_len - 标量,词汇表大小

tokenizer_list - 数组,对测试字符串test_str进行分词后的结果

'''

vocab_len = 0

test_str = "what's your favorite programming language?"

tokenizer_list = []

# 请在此添加实现代码 #

# ********** Begin *********#

# ********** End **********#

return vocab_len,tokenizer_list

2.使用 TfidfVectorizer 方法得到新闻数据的词汇-特征向量映射关系,指定使用内建的英文停止词列表作为停止词,并且词出现的最小频数等于 2。然后,将向量化转换器应用到新的测试数据。

def transfer2TfidfVector():

'''

使用TfidfVectorizer方法提取特征向量,并将向量化转换器应用到新的测试数据

TfidfVectorizer()方法的参数设置:

min_df = 2,stop_words="english"

test_data - 需要转换的原数据

返回值:

transfer_test_data - 二维数组ndarray

'''

test_data = ['Once again, to not believe in God is different than saying....... where is your evidence for that "god is" is meaningful at some level?\n Benedikt\n']

transfer_test_data = None

# 请在此添加实现代码 #

# ********** Begin *********#

# ********** End **********#

return transfer_test_data

代码如下:

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.feature_extraction.text import TfidfVectorizer

categories = [

'alt.atheism',

'talk.religion.misc',

]

# 加载对应目录的新闻数据,包含857 个文档

data = fetch_20newsgroups("./step5/",subset='train', categories=categories)

X = data.data

def transfer2CountVector():

'''

使用CountVectorizer方法提取特征向量,返回词汇表大小和前五条特征向量

返回值:

vocab_len - 标量,词汇表大小

tokenizer_list - 数组,对测试字符串test_str进行分词后的结果

'''

vocab_len = 0

test_str = "what's your favorite programming language?"

tokenizer_list = []

#Begin *********

v=CountVectorizer()

v.fit(X)

vocab_len=len(v.vocabulary_)

jxh=v.build_analyzer()

tokenizer_list=jxh(test_str)

# 请在此添加实现代码 #

# **********

# ********** End **********#

return vocab_len,tokenizer_list

def transfer2TfidfVector():

'''

使用TfidfVectorizer方法提取特征向量,并将向量化转换器应用到新的测试数据

TfidfVectorizer()方法的参数设置:

min_df = 2,stop_words="english"

test_data - 需要转换的原数据

返回值:

transfer_test_data - 二维数组ndarray

'''

test_data = ['Once again, to not believe in God is different than saying\n>I BELIEVE that God does not exist. I still maintain the position, even\n>after reading the FAQs, that strong atheism requires faith.\n>\n \nNo it in the way it is usually used. In my view, you are saying here that\ndriving a car requires faith that the car drives.\n \nFor me it is a conclusion, and I have no more faith in it than I have in the\npremises and the argument used.\n \n \n>But first let me say the following.\n>We might have a language problem here - in regards to "faith" and\n>"existence". I, as a Christian, maintain that God does not exist.\n>To exist means to have being in space and time. God does not HAVE\n>being - God IS Being. Kierkegaard once said that God does not\n>exist, He is eternal. With this said, I feel it\'s rather pointless\n>to debate the so called "existence" of God - and that is not what\n>I\'m doing here. I believe that God is the source and ground of\n>being. When you say that "god does not exist", I also accept this\n>statement - but we obviously mean two different things by it. However,\n>in what follows I will use the phrase "the existence of God" in it\'s\n>\'usual sense\' - and this is the sense that I think you are using it.\n>I would like a clarification upon what you mean by "the existence of\n>God".\n>\n \nNo, that\'s a word game. The term god is used in a different way usually.\nWhen you use a different definition it is your thing, but until it is\ncommonly accepted you would have to say the way I define god is ... and\nthat does not exist, it is existence itself, so I say it does not exist.\n \nInterestingly, there are those who say that "existence exists" is one of\nthe indubitable statements possible.\n \nFurther, saying god is existence is either a waste of time, existence is\nalready used and there is no need to replace it by god, or you are implying\nmore with it, in which case your definition and your argument so far\nare incomplete, making it a fallacy.\n \n \n(Deletion)\n>One can never prove that God does or does not exist. When you say\n>that you believe God does not exist, and that this is an opinion\n>"based upon observation", I will have to ask "what observtions are\n>you refering to?" There are NO observations - pro or con - that\n>are valid here in establishing a POSITIVE belief.\n(Deletion)\n \nWhere does that follow? Aren\'t observations based on the assumption\nthat something exists?\n \nAnd wouldn\'t you say there is a level of definition that the assumption\n"god is" is meaningful. If not, I would reject that concept anyway.\n \nSo, where is your evidence for that "god is" is meaningful at some level?\n Benedikt\n']

transfer_test_data = None

# 请在此添加实现代码 #

# ********** Begin *********#

v=TfidfVectorizer(min_df=2,stop_words="english")

jxh=v.fit_transform(X)

#v.get_feature_names()

#jxh.toarray()

transfer_test_data=v.transform(test_data).toarray()

# ********** End **********#

return transfer_test_data

第4关:使用scikit-learn导入数据集

编程要求:

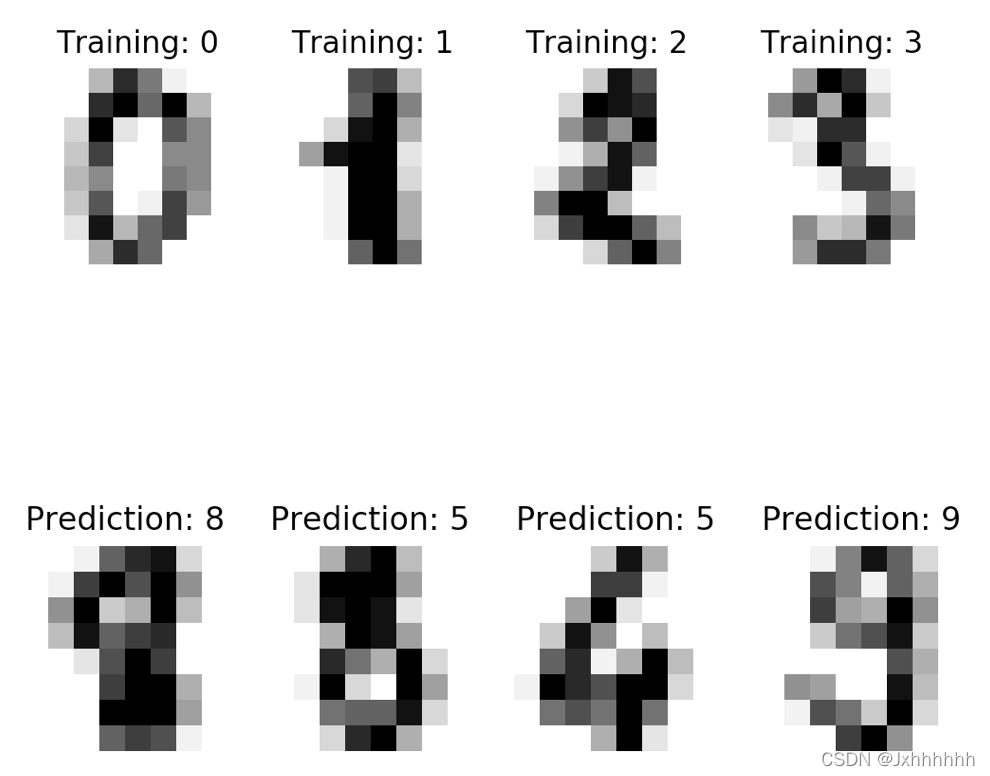

本关要求采用 scikit-learn 中的 svm 模型,训练一个对 digits 数据集进行分类的模型。训练集是 digits 数据集的前半部分数据,测试集是 digits 数据集的后半部分数据。

根据要求,补全右侧编辑器Begin-End区间的代码。

希望通过训练集,训练出分类模型,对于测试集数据能正确预测出图片对应的数字(0…9)。

本关需编程实现step2/digitsClassification.py 的createModelandPredict()函数创建分类模型并预测:

predicted 是模型对测试数据的预测值,该函数会返回预测值,并在测试文件中检测是否正确。

另外,step2/digitsClassification.py代码中包含两个显示样本图像的函数分别是show4TrainImage()和show4TestImage(),分别用来显示训练集的前 4 张图像,和模型预测值的前 4 张图像,大家可以通过在本机 Python 环境调用这两个函数直观查看原始样本集,并且验证预测是否正确,如下图所示:

代码如下:

import matplotlib.pyplot as plt

# 导入数据集,分类器相关包

from sklearn import datasets, svm, metrics

# 导入digits数据集

digits = datasets.load_digits()

n_samples = len(digits.data)

data = digits.data

# 使用前一半的数据集作为训练数据,后一半数据集作为测试数据

train_data,train_target = data[:n_samples // 2],digits.target[:n_samples // 2]

test_data,test_target = data[n_samples // 2:],digits.target[n_samples // 2:]

def createModelandPredict():

'''

创建分类模型并对测试数据预测

返回值:

predicted - 测试数据预测分类值

'''

predicted = None

# 请在此添加实现代码 #

#********** Begin *********#

classifier=svm.SVC()

classifier.fit(train_data,train_target)

predicted=classifier.predict(test_data)

#********** End **********#

return predicted

更多推荐

已为社区贡献4条内容

已为社区贡献4条内容

所有评论(0)