Repost: Pods cannot ping a Service name or ClusterIP in k8s

Reposted from: Pods cannot ping a Service name or ClusterIP in k8s

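iptables vs. IPVS

iptables:

Flexible and powerful

Rules are matched and updated by traversing the rule list, so latency grows linearly with the number of rules

IPVS:

Works in kernel space and offers better performance

Rich set of scheduling algorithms: rr, wrr, lc, wlc, ip hash…

IPVS is recommended for production environments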

① Test from inside the container

Ping the ClusterIP: no reply

coder@user1-container10-79754b6fcd-vmrhp:~/project$ ping 10.103.84.93

PING 10.103.84.93 (10.103.84.93) 56(84) bytes of data.

^C

--- 10.103.84.93 ping statistics ---

12 packets transmitted, 0 received, 100% packet loss, time 11244ms

② Ping the Service name: no reply

coder@user1-container10-79754b6fcd-vmrhp:~/project$ ping user1-container0

PING user1-container0.iblockchain.svc.cluster.local (10.107.200.99) 56(84) bytes of data.

^C

--- user1-container0.iblockchain.svc.cluster.local ping statistics ---

69 packets transmitted, 0 received, 100% packet loss, time 69610ms

Check the kube-proxy logs

k8s@master:~$ kubectl logs -n kube-system kube-proxy-6lw4d

W0521 14:10:15.618034 1 server_others.go:295] Flag proxy-mode="" unknown, assuming iptables proxy

I0521 14:10:15.640642 1 server_others.go:148] Using iptables Proxier.

I0521 14:10:15.640845 1 server_others.go:178] Tearing down inactive rules.

E0521 14:10:15.690628 1 proxier.go:583] Error removing iptables rules in ipvs proxier: error deleting chain "KUBE-MARK-MASQ": exit status 1: iptables: Too many links.

I0521 14:10:16.294665 1 server.go:555] Version: v1.14.0

I0521 14:10:16.307621 1 conntrack.go:52] Setting nf_conntrack_max to 1310720

I0521 14:10:16.308167 1 config.go:102] Starting endpoints config controller

I0521 14:10:16.308247 1 controller_utils.go:1027] Waiting for caches to sync for endpoints config controller

I0521 14:10:16.308300 1 config.go:202] Starting service config controller

I0521 14:10:16.308339 1 controller_utils.go:1027] Waiting for caches to sync for service config controller

I0521 14:10:16.408477 1 controller_utils.go:1034] Caches are synced for endpoints config controller

I0521 14:10:16.408477 1 controller_utils.go:1034] Caches are synced for service config controller

Cause: kube-proxy is running in iptables mode. After switching it to ipvs mode, the ClusterIP and the Service name can be pinged from inside a pod.
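To confirm which proxier is active without reading the whole log, the relevant line can be extracted with a small filter. This is a minimal sketch: the function name `detect_proxier` is made up for illustration, and it assumes the "Using <mode> Proxier." wording seen in the v1.14.0 log above, which other versions may phrase differently.

```shell
#!/usr/bin/env bash
# Print the proxier mode named in kube-proxy log text read from stdin.
# Assumes the "Using <mode> Proxier." line format from the v1.14.0 log above.
detect_proxier() {
  sed -n 's/.*Using \([a-z]*\) Proxier\..*/\1/p' | head -n 1
}

# Example with a line copied from the log above:
echo 'I0521 14:10:15.640642 1 server_others.go:148] Using iptables Proxier.' | detect_proxier
```

Against a live cluster it could be fed from the same command used above, for example `kubectl logs -n kube-system kube-proxy-6lw4d | detect_proxier`.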

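Solution:

Inspect the kube-proxy ConfigMap: kubectl get cm kube-proxy -n kube-system -o yaml

The output shows mode: "" (empty), which matches the log warning that kube-proxy falls back to the iptables proxier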

k8s@master:~$ kubectl get cm kube-proxy -n kube-system -o yaml

apiVersion: v1
data:
  config.conf: |-
    apiVersion: kubeproxy.config.k8s.io/v1alpha1
    bindAddress: 0.0.0.0
    clientConnection:
      acceptContentTypes: ""
      burst: 10
      contentType: application/vnd.kubernetes.protobuf
      kubeconfig: /var/lib/kube-proxy/kubeconfig.conf
      qps: 5
    clusterCIDR: 10.244.0.0/16
    configSyncPeriod: 15m0s
    conntrack:
      max: null
      maxPerCore: 32768
      min: 131072
      tcpCloseWaitTimeout: 1h0m0s
      tcpEstablishedTimeout: 24h0m0s
    enableProfiling: false
    healthzBindAddress: 0.0.0.0:10256
    hostnameOverride: ""
    iptables:
      masqueradeAll: false
      masqueradeBit: 14
      minSyncPeriod: 0s
      syncPeriod: 30s
    ipvs:
      excludeCIDRs: null
      minSyncPeriod: 0s
      scheduler: ""
      syncPeriod: 30s
    kind: KubeProxyConfiguration
    metricsBindAddress: 127.0.0.1:10249
    mode: ""
    nodePortAddresses: null
    oomScoreAdj: -999
    portRange: ""
    resourceContainer: /kube-proxy
    udpIdleTimeout: 250ms
    winkernel:
      enableDSR: false
      networkName: ""
      sourceVip: ""
  kubeconfig.conf: |-
    apiVersion: v1
    kind: Config
    clusters:
    - cluster:
        certificate-authority: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        server: https://172.18.167.38:6443
      name: default
    contexts:
    - context:
        cluster: default
        namespace: default
        user: default
      name: default
    current-context: default
    users:
    - name: default
      user:
        tokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token
kind: ConfigMap
metadata:
  creationTimestamp: "2020-05-02T04:00:39Z"
  labels:
    app: kube-proxy
  name: kube-proxy
  namespace: kube-system
  resourceVersion: "231"
  selfLink: /api/v1/namespaces/kube-system/configmaps/kube-proxy
  uid: 7bfe9acc-8c29-11ea-bf4b-be066b02b900

Edit the kube-proxy ConfigMap and set mode to ipvs: kubectl edit cm kube-proxy -n kube-system

...
    iptables:
      masqueradeAll: false
      masqueradeBit: 14
      minSyncPeriod: 0s
      syncPeriod: 30s
    ipvs:
      excludeCIDRs: null
      minSyncPeriod: 0s
      scheduler: ""
      syncPeriod: 30s
    kind: KubeProxyConfiguration
    metricsBindAddress: 127.0.0.1:10249
    mode: "ipvs"
...
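The same change can probably be made without an interactive editor by piping the ConfigMap through a text filter. The sketch below only shows the filter; `set_mode_ipvs` is a hypothetical helper name, and it assumes the field is written exactly as `mode: ""` as in the output above.

```shell
#!/usr/bin/env bash
# Rewrite an empty kube-proxy mode to "ipvs" in YAML read from stdin.
# Only a line that is exactly `mode: ""` (with any indentation) is changed;
# other empty fields such as `scheduler: ""` are left alone.
set_mode_ipvs() {
  sed 's/^\([[:space:]]*\)mode: ""[[:space:]]*$/\1mode: "ipvs"/'
}

# Example on two lines taken from the ConfigMap:
printf '    metricsBindAddress: 127.0.0.1:10249\n    mode: ""\n' | set_mode_ipvs
```

One way to use it, assuming `kubectl apply` is acceptable for this ConfigMap in your cluster: `kubectl get cm kube-proxy -n kube-system -o yaml | set_mode_ipvs | kubectl apply -f -`.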

Using IPVS requires the following steps

Enable IPVS support by loading the kernel modules

cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF

k8s@master:~$ sudo chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

ip_vs_sh 16384 0

ip_vs_wrr 16384 0

ip_vs_rr 16384 0

ip_vs 151552 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr

nf_defrag_ipv6 20480 2 nf_conntrack_ipv6,ip_vs

nf_conntrack_ipv4 16384 47

nf_defrag_ipv4 16384 1 nf_conntrack_ipv4

nf_conntrack 131072 12 xt_conntrack,nf_nat_masquerade_ipv4,nf_conntrack_ipv6,nf_conntrack_ipv4,nf_nat,nf_nat_ipv6,ipt_MASQUERADE,nf_nat_ipv4,xt_nat,nf_conntrack_netlink,ip_vs,xt_REDIRECT

libcrc32c 16384 4 nf_conntrack,nf_nat,xfs,ip_vs
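One caveat when reusing this module list: on newer kernels `modprobe nf_conntrack_ipv4` may fail, because that module was merged into `nf_conntrack` (in Linux 4.19, as far as I know). A hedged sketch for picking the module name from the kernel version follows; `conntrack_module` is an illustrative helper, not part of any tool.

```shell
#!/usr/bin/env bash
# Pick the conntrack module name for a given kernel version string.
# Assumption: nf_conntrack_ipv4 was merged into nf_conntrack in Linux 4.19.
conntrack_module() {
  local kver="${1%%-*}"   # drop a suffix such as "-generic" or "-1160.el7.x86_64"
  # sort -V orders version strings; if "4.19" sorts first, then kver >= 4.19
  if [ "$(printf '%s\n' "4.19" "$kver" | sort -V | head -n 1)" = "4.19" ]; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

# Print the module name for the running kernel:
conntrack_module "$(uname -r)"
```

The result could then replace the hard-coded last line of the script, for example `modprobe -- "$(conntrack_module "$(uname -r)")"`.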

Restart kube-proxy by deleting its pods so that they are recreated with the new configuration

k8s@master:~$ kubectl get pod -n kube-system | grep kube-proxy |awk '{system("kubectl delete pod "$1" -n kube-system")}'

pod "kube-proxy-6lw4d" deleted

Check the kube-proxy logs

k8s@master:~$ kubectl get pod -n kube-system |grep kube-proxy

kube-proxy-7sxpv 1/1 Running 0 86s

k8s@master:~$ kubectl logs -n kube-system kube-proxy-7sxpv

I0522 15:41:32.391309 1 server_others.go:189] Using ipvs Proxier.

W0522 15:41:32.391855 1 proxier.go:381] IPVS scheduler not specified, use rr by default

I0522 15:41:32.391999 1 server_others.go:216] Tearing down inactive rules.

I0522 15:41:32.477963 1 server.go:555] Version: v1.14.0

I0522 15:41:32.490131 1 conntrack.go:52] Setting nf_conntrack_max to 1310720

I0522 15:41:32.490544 1 config.go:102] Starting endpoints config controller

I0522 15:41:32.490604 1 controller_utils.go:1027] Waiting for caches to sync for endpoints config controller

I0522 15:41:32.490561 1 config.go:202] Starting service config controller

I0522 15:41:32.490659 1 controller_utils.go:1027] Waiting for caches to sync for service config controller

I0522 15:41:32.590799 1 controller_utils.go:1034] Caches are synced for endpoints config controller

I0522 15:41:32.590894 1 controller_utils.go:1034] Caches are synced for service config controller

The problem is now solved.

Enter the Pod's container again to test.

List the Services:

k8s@master:~$ kubectl get svc -n iblockchain

NAME                TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
mysql               ClusterIP   10.103.84.93    <none>        3306/TCP       15d
user1-container0    ClusterIP   10.107.200.99   <none>        80/TCP         6d21h
user1-container10   ClusterIP   10.110.35.111   <none>        80/TCP         6d13h
user1-container12   ClusterIP   10.97.251.199   <none>        80/TCP         5d13h
user1-container13   ClusterIP   10.98.144.124   <none>        80/TCP         5d13h
webapp              NodePort    10.102.63.171   <none>        80:30000/TCP   6d15h

Enter the pod and ping the Service name

coder@user1-container10-79754b6fcd-vmrhp:~/project$ ping user1-container0

PING user1-container0.iblockchain.svc.cluster.local (10.107.200.99) 56(84) bytes of data.

64 bytes from user1-container0.iblockchain.svc.cluster.local (10.107.200.99): icmp_seq=1 ttl=64 time=0.102 ms

64 bytes from user1-container0.iblockchain.svc.cluster.local (10.107.200.99): icmp_seq=2 ttl=64 time=0.091 ms

64 bytes from user1-container0.iblockchain.svc.cluster.local (10.107.200.99): icmp_seq=3 ttl=64 time=0.074 ms

One pitfall: if the two pods are in different namespaces, pinging by Service name requires the full DNS name, for example:

k8s@master:~$ kubectl get svc -A

NAMESPACE     NAME                             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)                      AGE
admin         dl1588401175722-tensorflow-cpu   ClusterIP   10.99.220.121    <none>        8000/TCP,8080/TCP,6080/TCP   20d
admin         dl1588401391658-tensorflow-cpu   ClusterIP   10.102.167.253   <none>        8000/TCP,8080/TCP,6080/TCP   20d
admin         dl1588401420047-tensorflow-gpu   ClusterIP   10.109.168.48    <none>        8000/TCP,8080/TCP,6080/TCP   20d
admin         dl1588698113367-tensorflow-cpu   ClusterIP   10.97.78.194     <none>        8000/TCP,8080/TCP,6080/TCP   16d
default       kubernetes                       ClusterIP   10.96.0.1        <none>        443/TCP                      20d
iblockchain   mysql                            ClusterIP   10.103.84.93     <none>        3306/TCP                     15d
iblockchain   user1-container0                 ClusterIP   10.107.200.99    <none>        80/TCP                       6d21h
iblockchain   user1-container10                ClusterIP   10.110.35.111    <none>        80/TCP                       6d13h
iblockchain   user1-container12                ClusterIP   10.97.251.199    <none>        80/TCP                       5d13h
iblockchain   user1-container13                ClusterIP   10.98.144.124    <none>        80/TCP                       5d13h
iblockchain   webapp                           NodePort    10.102.63.171    <none>        80:30000/TCP                 6d15h
kube-system   kube-dns                         ClusterIP   10.96.0.10       <none>        53/UDP,53/TCP,9153/TCP       20d

Service name only: dl1588401175722-tensorflow-cpu

coder@user1-container10-79754b6fcd-vmrhp:~/project$ ping dl1588401175722-tensorflow-cpu

ping: dl1588401175722-tensorflow-cpu: Name or service not known

Full DNS name: dl1588401175722-tensorflow-cpu.admin.svc.cluster.local

coder@user1-container10-79754b6fcd-vmrhp:~/project$ ping dl1588401175722-tensorflow-cpu.admin.svc.cluster.local

PING dl1588401175722-tensorflow-cpu.admin.svc.cluster.local (10.99.220.121) 56(84) bytes of data.

64 bytes from dl1588401175722-tensorflow-cpu.admin.svc.cluster.local (10.99.220.121): icmp_seq=1 ttl=64 time=0.074 ms

64 bytes from dl1588401175722-tensorflow-cpu.admin.svc.cluster.local (10.99.220.121): icmp_seq=2 ttl=64 time=0.094 ms

64 bytes from dl1588401175722-tensorflow-cpu.admin.svc.cluster.local (10.99.220.121): icmp_seq=3 ttl=64 time=0.116 ms

64 bytes from dl1588401175722-tensorflow-cpu.admin.svc.cluster.local (10.99.220.121): icmp_seq=4 ttl=64 time=0.127 ms
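The full name follows the pattern `<service>.<namespace>.svc.<cluster domain>`. As a small illustration, the helper below builds it from its parts; `svc_fqdn` is a made-up name, and the default domain `cluster.local` is taken from the ping output above and may differ in other clusters.

```shell
#!/usr/bin/env bash
# Build the fully qualified DNS name of a Service.
# Usage: svc_fqdn <service> <namespace> [cluster-domain]
# The cluster domain defaults to cluster.local, as seen in the output above.
svc_fqdn() {
  local svc="$1" ns="$2" domain="${3:-cluster.local}"
  printf '%s.%s.svc.%s\n' "$svc" "$ns" "$domain"
}

# Example with the Service from the admin namespace:
svc_fqdn dl1588401175722-tensorflow-cpu admin
```

Inside a pod this could be combined with the test above, for example `ping "$(svc_fqdn dl1588401175722-tensorflow-cpu admin)"`.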

Why a Service cannot be pinged when K8S uses iptables

Reference 1: https://www.yoyoask.com/?p=4742

Reference 2: https://blog.csdn.net/sinat_20184565/article/details/102410231

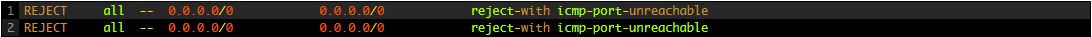

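In iptables mode, the rules kube-proxy generates match only the protocol and port that a Service declares, so ICMP traffic to a ClusterIP is not handled by default

Ping works only if you add rules yourself to handle ICMP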

IPVS

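IPVS, by contrast, handles ICMP packets in the outside-to-inside direction by default, and in ipvs mode kube-proxy binds each ClusterIP to the kube-ipvs0 dummy interface on the node, so the ping is answered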
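Because a Service only forwards the ports it declares, a TCP connection to one of those ports is a more direct health check than ping and works in either proxy mode. The following is a rough sketch using bash's `/dev/tcp` redirection; `check_tcp` is a hypothetical helper, and the 3 second timeout is an arbitrary choice.

```shell
#!/usr/bin/env bash
# Return 0 if a TCP connection to host:port succeeds within 3 seconds,
# non-zero otherwise. Relies on bash's /dev/tcp pseudo-device and the
# coreutils `timeout` command being available in the container.
check_tcp() {
  local host="$1" port="$2"
  timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}
```

For the mysql Service listed earlier, a check from inside the pod might look like `check_tcp 10.103.84.93 3306 && echo reachable || echo unreachable`.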
